Monthly visits

193.90 M

Bounce rate

56.27%

Pages per visit

2.71

Time on site (s)

115.91

Global rank

-

Country rank

-

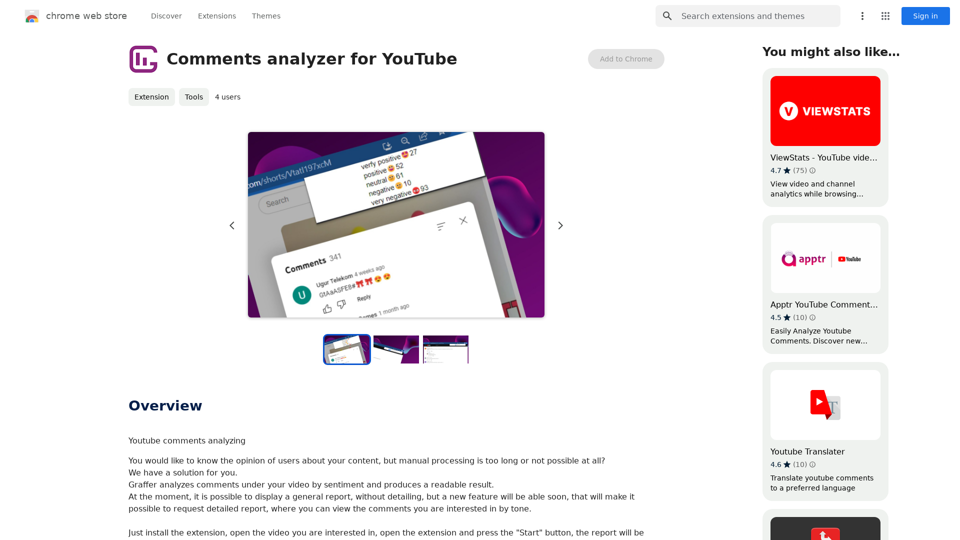

YouTube Comment Analyzer


Latest traffic information

Recent visits

Traffic sources

- Social media: 0.48%

- Paid referrals: 0.55%

- Email: 0.15%

- Referrals: 12.81%

- Search engines: 16.21%

- Direct: 69.81%

Top keywords

| Keyword | Estimated traffic | Search volume | Cost per click |
|---|---|---|---|

Country ranking

| Country | Share of visits |
|---|---|
| United States | 17.22% |
| India | 9.80% |
| Russia | 7.20% |
| Brazil | 6.71% |
| Japan | 3.04% |