Reflection-70B is an advanced open-source language model designed to address the hallucination problem in AI systems. Built on the Llama-3.1 framework, it incorporates special tokens to structure the reasoning process and employs stricter control mechanisms to reduce false information generation. The model has demonstrated superior performance across various benchmarks, outperforming even some closed-source models.

Reflection-70B: Hallucination-Free AI

Reflection-70B is an advanced open-source language model that aims to address the hallucination problem in AI systems

Introduction

Feature

-

Advanced Architecture

- Built on Llama-3.1 framework

- Incorporates special tokens: <thinking>, <reflection>, and <output>

- Structures reasoning process for improved accuracy

-

Comprehensive Training

- Trained on synthetic data generated by Glaive

- Utilizes large datasets for enhanced natural language processing

-

Superior Performance

- Excels in benchmarks: MMLU, MATH, IFEval, and GSM8K

- Outperforms closed-source models like GPT-4o in several tests

-

Hallucination Reduction

- Employs stricter control mechanisms during information verification

- Significantly reduces false information generation

- Enhances user trust and reliability

-

Open-Source Availability

- Weights available on Hugging Face

- API release planned through Hyperbolic Labs for easier integration

-

Ongoing Development

- More powerful version, Reflection-405B, expected soon

- Anticipated to outperform top proprietary models significantly

How to Use?

-

Access Reflection-70B:

- Visit https://reflection70b.com

- Click the "Start" button

- Begin chatting with the model

-

Explore Benchmarks:

- Review the performance table for comparison with other models

- Focus on metrics like GPQA, MMLU, HumanEval, MATH, and GSM8K

-

Understand the Technology:

- Familiarize yourself with Reflection-Tuning technique

- Learn how special tokens structure the model's thought process

-

Stay Updated:

- Keep an eye out for the release of Reflection-405B

- Follow Hyperbolic Labs for API release information

FAQ

Q: What is Reflection-70B? A: Reflection-70B is an advanced open-source language model designed to minimize hallucinations and improve accuracy in AI-generated outputs through a technique called Reflection-Tuning.

Q: How does Reflection-Tuning work? A: Reflection-Tuning teaches the model to detect and correct its own reasoning errors by introducing special tokens like <thinking>, <reflection>, and <output> to structure its thought process.

Q: What benchmarks does Reflection-70B excel in? A: Reflection-70B has demonstrated superior performance across various benchmarks, including MMLU, MATH, IFEval, and GSM8K, outperforming even closed-source models like GPT-4o.

Q: How does Reflection-70B reduce hallucinations? A: By employing stricter control mechanisms during information verification stages, Reflection-70B significantly reduces the generation of false information, enhancing user trust and reliability.

Q: Where can I access Reflection-70B? A: The weights for Reflection-70B are available on Hugging Face, and an API is set to be released through Hyperbolic Labs for easier integration into applications.

Evaluation

-

Reflection-70B represents a significant advancement in open-source language models, particularly in addressing the critical issue of AI hallucinations. Its performance across various benchmarks is impressive, often surpassing closed-source competitors.

-

The model's architecture, incorporating special tokens for structured reasoning, is innovative and shows promise in improving AI reliability. This approach could set a new standard for transparent and trustworthy AI systems.

-

The availability of Reflection-70B as an open-source model is commendable, potentially accelerating research and development in the field of AI language models. However, the effectiveness of its implementation in real-world applications remains to be seen.

-

While the model shows impressive benchmark results, it's important to note that real-world performance may vary. More extensive testing in diverse, practical scenarios would provide a clearer picture of its capabilities and limitations.

-

The ongoing development of Reflection-405B indicates a commitment to continuous improvement. However, the AI community should remain vigilant about potential biases or limitations that may emerge as the model scales up.

-

The focus on reducing hallucinations is crucial for building trust in AI systems. However, users should still approach AI-generated content with critical thinking and not rely solely on the model's outputs without verification.

Latest Traffic Insights

Monthly Visits

0

Bounce Rate

0.00%

Pages Per Visit

0.00

Time on Site(s)

0.00

Global Rank

-

Country Rank

-

Recent Visits

Traffic Sources

- Social Media:0.00%

- Paid Referrals:0.00%

- Email:0.00%

- Referrals:0.00%

- Search Engines:0.00%

- Direct:0.00%

Related Websites

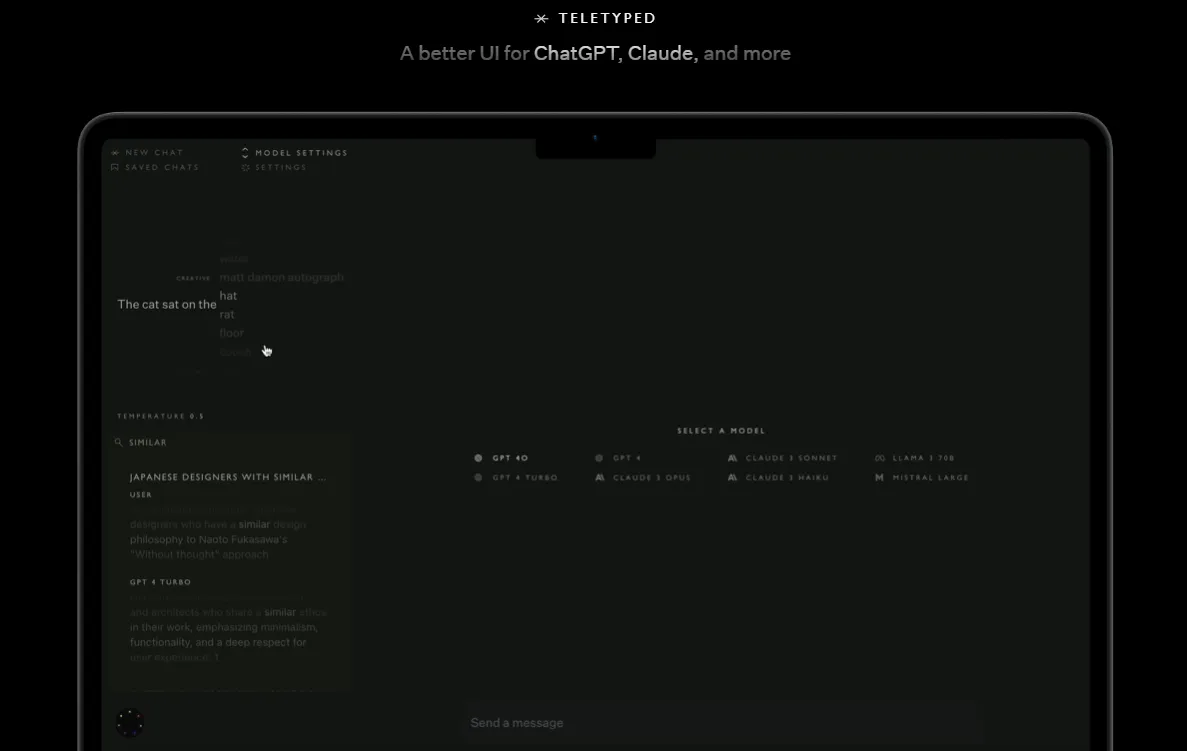

A better UI for ChatGPT, Claude, and more. Features include full-text chat search, chat saving, dynamic model switching, and editable model responses. Customize your experience with various visual themes and adjustable creativity settings.

0

character.ai | Personalized AI for every moment of your day

character.ai | Personalized AI for every moment of your dayMeet AIs that feel lifelike. Chat with anyone, anywhere, anytime. Experience the power of highly intelligent chatbots that listen to you, understand you, and remember you.

1.55 M

Poe allows you to ask questions, receive immediate responses, and engage in interactive conversations with AI. It provides access to GPT-4, gpt-3.5-turbo, Claude from Anthropic, and various other bots.

15.11 M

By listening to the live audio of your interview, AI Sensei intelligently identifies the questions posed by your interviewer and delivers structured, concise answers in real-time.

52.49 K

AI-Powered Customer Service Automation Platform | Ada

AI-Powered Customer Service Automation Platform | AdaReplace basic conversational chatbots with Ada's AI chatbot. Onboard, measure, and coach a top-performing AI Agent to resolve over 70% of customer service inquiries.

355.15 K

Best Character AI Chat Online Without Restrictions - Eros AI

Best Character AI Chat Online Without Restrictions - Eros AIJoin Eros AI and chat with AI characters, including AI girlfriends and anime girls! Create personalized connections, have conversations that feel real, and discover your perfect AI friends.

107.44 K

Maximize engagement with unified AI text and voice communication. Engage across every channel and connect with your favourite business tools. Get started free.

58.08 K