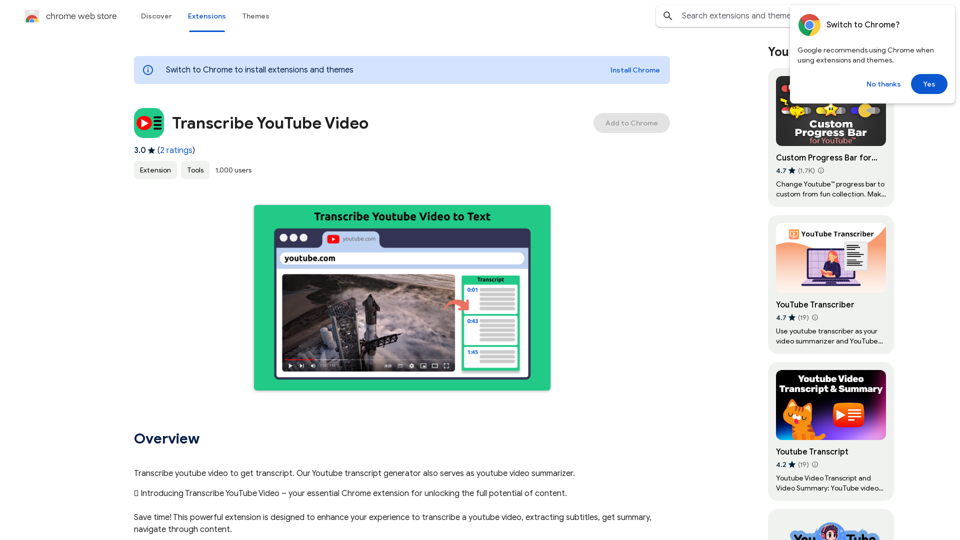

Transcribe YouTube Video is a Chrome extension that uses AI technology to transcribe and summarize YouTube videos. It offers features like multi-language support, time navigation, and transcript downloading. This tool is designed for various users, including non-native speakers, students, teachers, professionals, researchers, and content creators, aiming to enhance accessibility, save time, and improve content understanding.


Transcribe any YouTube video to get its full transcript. Our YouTube transcript generator doubles as a YouTube video summarizer.


Features

AI YouTube Summarizer

- Transcribe and summarize YouTube videos using AI technology

- Provide quick insights without watching the entire content

Multi-Language Support

- Transcribe and summarize videos in various languages

- Cater to a global audience

Time Navigation

- Jump directly to specific parts of the video

- Intuitive interface for easy navigation
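The page does not say how the extension's time navigation is implemented. Below is a minimal sketch that assumes transcript lines carry timestamps such as `1:02:03`. It shows one common way to turn such a timestamp into a jump point: YouTube's `t` query parameter, given in seconds. The helpers `timestamp_to_seconds` and `jump_link` are hypothetical, and `VIDEO_ID` is a placeholder. An extension running on the video page would more likely seek the player directly than build a link.

```python
from urllib.parse import urlencode


def timestamp_to_seconds(ts: str) -> int:
    """Convert 'SS', 'MM:SS' or 'HH:MM:SS' into a whole number of seconds."""
    parts = [int(p) for p in ts.strip().split(":")]
    if not 1 <= len(parts) <= 3:
        raise ValueError(f"unsupported timestamp: {ts!r}")
    seconds = 0
    for part in parts:
        # Each colon-separated field is 60 times the next smaller unit.
        seconds = seconds * 60 + part
    return seconds


def jump_link(video_id: str, ts: str) -> str:
    """Build a watch URL that starts playback at the given timestamp (hypothetical helper)."""
    query = urlencode({"v": video_id, "t": f"{timestamp_to_seconds(ts)}s"})
    return f"https://www.youtube.com/watch?{query}"


print(jump_link("VIDEO_ID", "1:02:03"))
# prints https://www.youtube.com/watch?v=VIDEO_ID&t=3723s
```

Converting to plain seconds keeps the link format simple and works the same for short clips and multi-hour videos.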

Transcript Management

- Download transcripts for offline use

- Copy video transcript or AI summary to clipboard

- Flexible options for integrating YouTube content into projects
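The download format the extension actually uses is not specified. Below is a minimal sketch of how a timestamped transcript could be written out as plain text for offline use. It assumes the transcript is a list of segments, each with a start time in seconds and its text. `Segment`, `format_timestamp`, `transcript_to_text`, and `save_transcript` are hypothetical names for illustration only.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Segment:
    """One transcript line (assumed structure): start time in seconds plus its text."""
    start: float
    text: str


def format_timestamp(seconds: float) -> str:
    """Render seconds as M:SS, or H:MM:SS once the video passes one hour."""
    total = int(seconds)
    hours, rem = divmod(total, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours}:{minutes:02d}:{secs:02d}" if hours else f"{minutes}:{secs:02d}"


def transcript_to_text(segments: list[Segment]) -> str:
    """Join segments into '[timestamp] text' lines, ending with a newline."""
    return "\n".join(f"[{format_timestamp(s.start)}] {s.text}" for s in segments) + "\n"


def save_transcript(segments: list[Segment], path: str) -> None:
    """Write the formatted transcript to a UTF-8 text file for offline use."""
    Path(path).write_text(transcript_to_text(segments), encoding="utf-8")


segments = [Segment(0, "Welcome."), Segment(75.4, "Next topic.")]
print(transcript_to_text(segments), end="")
# prints:
# [0:00] Welcome.
# [1:15] Next topic.
```

Keeping the timestamp on each line means the downloaded text can still be mapped back to positions in the video.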

User-Friendly Interface

- Simple installation process via Chrome Web Store

- Easy-to-use interface on YouTube video pages

Free to Use

- Available to all users at no cost

- Relies on funding to cover server and AI costs

Privacy Protection

- Ensures user data security

FAQ

How do I use Transcribe YouTube Video?

- Install the extension from Chrome Web Store

- Open a YouTube video

- Open the green panel to view the full transcript, the AI summary, and timeline navigation

- Download or copy the text as needed

Is the extension free?

Yes, the extension is free to use. Running its servers and AI services does cost money, so the project relies on funding to cover those costs.

Can it transcribe videos in any language?

Yes, users can choose their preferred language for transcription and summarization. A default language option is also available.

How does it work?

On any YouTube video page, click the extension's button to activate it.

Who can benefit from this tool?

- Non-native speakers: Improve language skills

- Students: Enhance learning and note-taking

- Teachers: Improve teaching methodologies

- Professionals: Save time on research and analysis

- Researchers: Quickly gather information from YouTube

- Content creators: Improve accessibility and engagement

Related Websites

Create high-quality books faster and more cost-effectively than ever, with the world’s first AI designed exclusively for digital publishers.

5.18 K

ChatTTS is a voice generation model on GitHub at 2noise/chattts. Chat TTS is specifically designed for conversational scenarios. It is ideal for applications such as dialogue tasks for large language model assistants, as well as conversational audio and video introductions. The model supports both Chinese and English, demonstrating high quality and naturalness in speech synthesis. This level of performance is achieved through training on approximately 100,000 hours of Chinese and English data. Additionally, the project team plans to open-source a basic model trained with 40,000 hours of data, which will aid the academic and developer communities in further research and development.

23.26 K

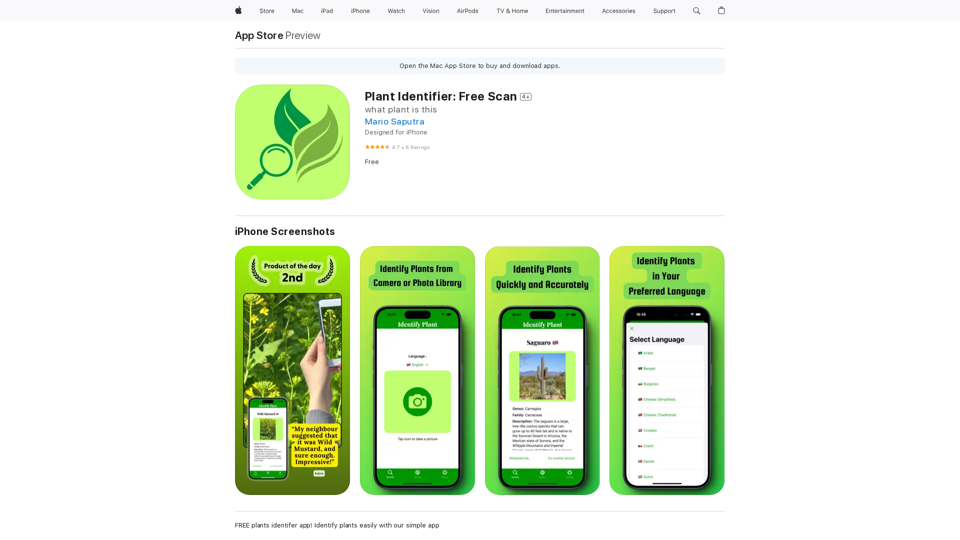

Use your camera or a picture to quickly identify plants with AI. Perfect for gardeners, nature lovers, and anyone curious about the plants around them. Features: * Instantly identify plants using AI-powered image recognition technology * Browse a vast database of plants from around the world * Learn about plant care, habitat, and other interesting facts * Snap a photo or upload an image to identify plants in seconds * Explore plant families, genera, and species to expand your knowledge * Create a personalized plant journal to track your discoveries

124.77 M